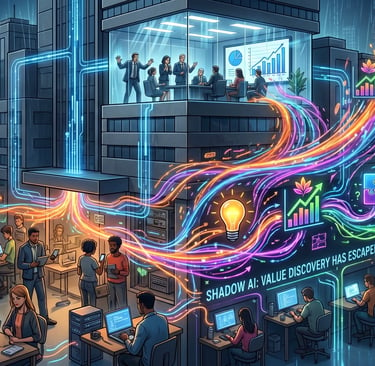

Shadow AI: Value Discovery Has Escaped the Hierarchy

Shadow AI is what happens when employees discover where AI creates real value before the hierarchy has approved the roadmap. In that sense, value discovery has escaped the hierarchy. Most official AI programmes are still run like centrally planned economies; shadow AI behaves more like market discovery. The leadership challenge is not to crush that signal but to detect it, sanction it, and systemise it, because the edge discovers and the centre enables.

4/16/2026 · 6 min read

Most firms are misreading shadow AI. It is not just a compliance problem; it is a signal that employees are already discovering where AI creates value before leadership has caught up. IBM defines shadow AI as the unsanctioned use of AI tools without formal IT approval or oversight. The more important point is what that behaviour reveals: AI is shifting value discovery away from the centre of the organisation and into the flow of work itself.

What shadow AI actually is

Shadow AI is the unofficial use of AI for work: a marketer using a personal ChatGPT account to draft copy, an analyst using an external model to speed up synthesis, a manager using Claude to structure a board update, or a recruiter using an unapproved tool to summarise CVs. What these cases share is the absence of formal IT approval or oversight. IBM highlights why companies worry about it: sensitive data can leak, regulatory exposure can increase, and reputational damage can follow.

That risk story is real, but it is not the whole story. IBM also notes that employees turn to shadow AI to increase productivity, accelerate innovation, and bypass operational inefficiencies. That is the clue most leadership teams are still missing. People are not reaching for these tools because they want to subvert the company. They are reaching for them because the tools help them do the work.

We are treating a signal like a violation

Most organisations still interpret shadow AI as a governance glitch. The logic is familiar: if people are using unapproved tools, the answer must be more control, more approvals, more review, and slower access. But that response assumes the main problem is employee behaviour. It often is not.

The more interesting problem is organisational lag. Employees are discovering useful applications faster than the institution can approve them. A 2025 report on the “shadow AI economy” found that only 40% of surveyed companies had purchased an official LLM subscription, while workers from more than 90% of those companies reported regular use of personal AI tools for work. In other words, the workforce had already moved before the formal programme had.

That is why shadow AI matters. It is not just unsanctioned usage. It is revealed demand.

The old model assumed the centre would find the value

For years, large organisations have operated with an implicit theory of innovation: the centre identifies the opportunity, leadership sets the priorities, committees define the use cases, pilots get approved, and the business adopts what has been designed for it. That is a form of central planning.

And for many earlier technologies, it was workable. The tools were expensive, specialised, and hard to test informally. The hierarchy could plausibly claim a monopoly on deciding where value lived.

AI breaks that sequence. Generative AI is cheap to try, easy to access, and immediately useful in day-to-day knowledge work. A person can test it against a real task in minutes. A team can start adapting it to a workflow long before a steering group has scheduled its first workshop. That changes the mechanism of discovery. Value no longer has to be found centrally and distributed outward; it can be discovered locally, experimentally, and in real time by people closest to the work.

Shadow AI is what market discovery looks like inside a firm

This is the best analogy I have found for what is happening.

Most official AI programmes are still run like centrally planned economies. The centre decides which use cases are legitimate, where resources should go, and when adoption may begin.

But shadow AI behaves more like market discovery.

People closest to the work are running thousands of tiny experiments. They are discovering where AI saves time, where it improves output, where it removes friction, and where existing systems are failing them. They are generating signals that no committee could surface as quickly or as honestly. Seen this way, shadow AI is not just misconduct. It is an internal price signal. It tells leadership where demand is real. The gap between the 40% of companies with an official subscription and the 90%+ of companies whose workers report using personal tools is not random misuse; it is evidence that the organisation’s formal response is lagging behind real need.

This is the deeper meaning of the phrase: value discovery has escaped the hierarchy.

The economics make this hard to dismiss

This is not just a cultural observation. There is measurable productivity underneath it.

The St. Louis Fed found that 28% of workers used generative AI at work to some degree in 2024, and 21.8% had used it in the previous week. Among users, average time savings were 5.4% of work hours, about 2.2 hours per week for a 40-hour worker. The authors estimate that workers are about 33% more productive in each hour they use generative AI. Crucially, they also note that worker usage was running ahead of formal firm adoption, meaning much of the gain was being generated informally rather than through official enterprise rollouts.
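As a quick check on the arithmetic behind those figures, the short sketch below converts the 5.4% time-saving share into weekly hours. The function name and the 25-person team are illustrative assumptions, not part of the St. Louis Fed analysis.

```python
def weekly_hours_saved(share_saved: float, weekly_hours: float = 40.0) -> float:
    """Hours saved per week, given the share of work hours saved."""
    return share_saved * weekly_hours


# 5.4% of a 40-hour week, the figure quoted above
per_user = weekly_hours_saved(0.054)
print(f"{per_user:.2f} hours per user per week")

# Hypothetical team of 25 regular users (assumed size, for illustration only)
team_size = 25
print(f"{per_user * team_size:.0f} hours per week across the team")
```

The first line works out to 2.16 hours, which rounds to the 2.2 hours quoted. Scaled to the assumed team, that is roughly 54 hours a week of capacity appearing outside any official programme.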

Once that happens, the old top-down innovation logic starts to wobble. The question is no longer, “How do we get people to adopt AI?” The real question is, “How do we recognise, govern, and scale the value they are already discovering without losing control of the risks?”

The biggest mistake leaders make is treating the signal as the problem

Of course shadow AI carries real exposure. IBM is right to call out data leakage, compliance risk, and unmanaged use of sensitive information.

But the bigger strategic mistake is to look at shadow AI and see only the danger.

When leaders interpret it purely as non-compliance, their instinct is suppression: tighten access, add approvals, slow experimentation, and force all activity back into formal channels. That may create the appearance of control, but it does not remove the underlying demand. It simply drives the behaviour underground.

And once that happens, the company gets the worst of both worlds: real usage without visibility, real risk without guardrails, and real learning without institutional capture. If employees are already using AI to work around friction, then they are telling you something important about your workflows, systems, and operating model. They are showing you where the work is broken, where the bottlenecks are, and where leverage is easiest to unlock.

A better model: Signal, Sanction, Systemise

This is where most firms need a new operating logic.

Not top-down invention of use cases. Not pilot theatre. Not abstract policy first.

A better model is: Signal, Sanction, Systemise.

Signal

Start by treating shadow AI as intelligence, not just infraction. Find where unofficial AI use already exists. Map the recurring workflows where people are using it. Identify the tasks that are high-frequency, high-friction, text-heavy, decision-heavy, or time-draining. Look for repeated patterns of time saved, better synthesis, faster output, or reduced cognitive load. The point is to detect where the business is already voting with its behaviour. The 40% versus 90% gap tells you those signals are already there in most organisations.
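One way to make that mapping concrete is a simple ranking of observed workflows. The sketch below is illustrative only: the `Workflow` fields, the example data, and the scoring rule (users × weekly frequency × minutes saved) are all assumptions, not a method taken from the sources cited here.

```python
from dataclasses import dataclass


@dataclass
class Workflow:
    name: str
    users: int             # people observed using AI for this task
    runs_per_week: float   # how often each person performs the task
    minutes_saved: float   # self-reported minutes saved per run

    @property
    def weekly_hours_saved(self) -> float:
        """Rough total of hours saved per week across all users."""
        return self.users * self.runs_per_week * self.minutes_saved / 60


def rank_signals(workflows):
    """Sort workflows so the strongest demand signal comes first."""
    return sorted(workflows, key=lambda w: w.weekly_hours_saved, reverse=True)


# Hypothetical survey results, invented for illustration
observed = [
    Workflow("Structure board update", users=3, runs_per_week=1, minutes_saved=45),
    Workflow("Draft campaign copy", users=12, runs_per_week=5, minutes_saved=20),
    Workflow("Summarise CVs", users=4, runs_per_week=10, minutes_saved=15),
]

for w in rank_signals(observed):
    print(f"{w.name}: {w.weekly_hours_saved:.1f} hours/week")
```

A real exercise would weigh data sensitivity and risk alongside hours saved; the ranking here is only meant to show how scattered anecdotes can become a prioritised list.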

Sanction

Then move fast to provide a sanctioned route that is good enough to compete with consumer tools. This is where the centre still matters. It needs to provide approved environments, clear ownership, practical safe-use rules, and human accountability. The useful process language here is not “shut it down,” but “make the good behaviour governable.” Some modern AI workflow programmes get this right by focusing on workflow design, prompt frameworks, task-level playbooks, ethical guardrails, and validation loops rather than generic tool training. That is the right instinct.

Systemise

Finally, turn repeated wins into operating assets. Build playbooks, reusable prompts, workflow templates, role-specific assistants where appropriate, and short feedback loops to refine what works. Update the workflow, not just the policy. The goal is to convert scattered individual experimentation into institutional capability. Some of the better practical models in the market now emphasise mapped use cases, clear ownership, workflow frameworks, playbooks, and ongoing optimisation rather than one-off training.

That is what I mean by bottom-up in discovery, top-down in enablement, and disciplined in governance.

The centre still matters, but its job changes

This is not anti-management. It is not an argument for chaos. It is not an argument that every employee should plug random models into critical workflows and hope for the best.

Hierarchy still matters enormously for trust, accountability, risk, prioritisation, and scale. But it can no longer pretend to be the sole origin of insight.

Its role is changing from discovering all the value itself to recognising, validating, protecting, and scaling what the edges are already proving. Put simply: the edge discovers; the centre enables. That is a more realistic management model for the AI era than the old belief that leadership must find every use case first and distribute it downward through a formal programme.

The real leadership challenge

This is why shadow AI is such an important story.

It is not really about unauthorised tool use. It is about how AI changes the mechanism by which firms discover value.

For years, most organisations assumed the centre would find the opportunities and the rest of the business would execute. AI flips that. The people closest to the work are now often the first to discover what matters. The hierarchy sees it later.

That is the deeper meaning of shadow AI.

Value discovery has escaped the hierarchy.

The firms that win will not be the ones with the longest policy documents or the slowest approvals. They will be the ones that can read bottom-up experimentation for what it is: messy, risky, imperfect, but incredibly valuable intelligence about where the business is trying to go next.
